The AI Threat Your Business Isn't Ready For

What the headlines won't tell you about generative AI, cybersecurity, and the risks hiding in plain sight

Key Takeaways

- Phishing is now perfect: AI-generated attacks are indistinguishable from legitimate communication—your training won't save you.

- Shadow AI is your biggest internal leak: Employees using public AI tools may be exposing sensitive data without knowing it.

- Old defenses don't catch behavior: Blocking IPs and signatures is yesterday's war—modern threats require behavioral detection.

- Frameworks exist to guide you: OWASP, NIST, and the EU AI Act provide actionable paths to AI governance.

Last month, a CFO at a mid-sized manufacturing company received a call from the CEO. "We need to wire $2.3 million for the acquisition we discussed. I'm sending the details now." The voice was unmistakable. The urgency was typical. The transfer was approved in 47 minutes.

The CEO had never made that call. The voice was synthetic—generated by AI from a few minutes of audio scraped from earnings calls and podcasts.

This isn't a hypothetical. This isn't "emerging technology." This is happening right now, to businesses just like yours.

And it's just one example of how artificial intelligence has fundamentally changed the rules of cybersecurity—not in some distant future, but today.

// THE NEW REALITY

For thirty years, cyber attacks followed a predictable pattern. Defenders studied these patterns, built defenses around them, and generally knew what to expect.

AI changed everything in eighteen months.

The same technology that powers your customer service chatbot and writes your marketing emails is now being used to craft attacks that your current defenses simply weren't built to detect.

The Numbers That Should Keep You Up at Night

But here's what those numbers don't capture: the complete transformation of how attacks happen, who can launch them, and why traditional defenses are failing.

How We Got Here: A Brief History of Digital Defense

To understand why AI changes everything, you need to understand what came before.

For decades, cyber attacks have followed a predictable sequence. Security professionals call it the Cyber Kill Chain—seven stages that every successful attack must complete. Think of it as a burglar's checklist: case the house, pick the lock, disable the alarm, grab the valuables, escape.

The beauty of this model? It gave defenders multiple opportunities to stop an attack. Break any link in the chain, and the whole operation fails.

This framework worked for thirty years. Not perfectly—but well enough that cybersecurity evolved into a mature, structured discipline with clear best practices and predictable threats.

Then generative AI arrived.

The Three Transformations You Need to Understand

AI hasn't just made attacks "better." It has fundamentally transformed three dimensions of cybersecurity risk:

1. The End of the Obvious Attack

Then

Phishing emails had obvious tells: broken English, generic greetings, suspicious urgency. Training employees to spot them actually worked.

Now

AI generates flawless, personalized messages that reference your current projects, mimic your colleagues' writing styles, and arrive at precisely the right moment.

What This Means for Your Business

That annual phishing training? It's no longer enough. When an email perfectly mimics your CEO's communication style, references a real project by name, and arrives while you're actually waiting for approval on that project—even security-conscious employees will click.

The "Nigerian prince" email is now a museum piece. The new attacks are indistinguishable from legitimate communication.

2. The Barrier to Entry Has Collapsed

Then

Launching sophisticated attacks required years of technical training, specialized tools, and significant resources. Serious threats came from organized groups.

Now

Someone with no coding experience can generate custom malware, craft convincing pretexts, and identify vulnerable targets—all by asking an AI the right questions.

The Uncomfortable Truth

You used to worry about sophisticated nation-state actors and organized criminal groups. Now you also need to worry about the disgruntled teenager with a ChatGPT subscription and a grudge.

The tools of digital destruction have been democratized.

3. The Target Is Now the AI Itself

This is the shift most businesses haven't grasped yet.

If your organization uses AI tools—and increasingly, every organization does—those AI systems themselves become attack targets. Not the servers they run on. Not the databases they access. The AI's reasoning itself.

Traditional Security

Strict separation between "instructions" (code) and "data" (information). You could protect each independently.

AI Security

Instructions and data blur together. An attacker can manipulate the AI's behavior simply by crafting the right input—no "hacking" required.

The OWASP Top 10: What Your AI Systems Are Vulnerable To

OWASP—the global nonprofit that sets security standards—has identified the most critical vulnerabilities in AI systems. If you're using or building AI applications, these are the risks you need to understand:

| Vulnerability | What It Means for Your Business |

|---|---|

| Prompt Injection | Attackers craft inputs that trick your AI into ignoring its rules. Imagine your customer service bot suddenly sharing confidential pricing or internal policies because someone asked it the "right" way. |

| Data Leakage | Your AI inadvertently reveals sensitive information in its responses—customer data, proprietary processes, or competitive intelligence it was trained on. |

| Training Data Poisoning | If attackers can corrupt the data used to train your AI, they can create hidden backdoors—like teaching the AI to automatically approve certain transactions or ignore specific red flags. |

| Overreliance | AI "hallucinations"—confident but completely wrong answers—get treated as fact, leading to flawed decisions, legal exposure, or customer harm. |

| Supply Chain Risks | The AI models and plugins you integrate may contain vulnerabilities you can't see or control. Do you know what's really inside the tools you're using? |

The Threat Inside Your Walls: Shadow AI

While you're focused on external attackers, there's a threat inside your organization that might be more immediate—and it's driven by your best employees.

The Shadow AI Problem

Your marketing lead pastes customer feedback into ChatGPT to draft a response. Your developer copies proprietary code into an AI assistant for debugging help. Your analyst uploads a confidential spreadsheet to an AI tool for visualization.

Each one may have just committed a data breach.

Shadow AI happens because:

- Good intentions: Employees genuinely want to be more productive

- Easy access: Public AI tools are powerful and free

- Invisible risk: There's no alarm when data leaves via a browser tab

- Policy gaps: Most organizations haven't addressed AI use

Your firewall can't stop it. Your antivirus won't detect it. It looks exactly like normal web browsing—because it is.

A Simple Policy Fix

The fastest way to address Shadow AI risk: Enterprise AI vs. Public AI.

Public tools (free ChatGPT, consumer Copilot, public Claude) may train on your data and lack enterprise controls. Enterprise versions (ChatGPT Enterprise, Microsoft 365 Copilot, Claude for Business) offer data isolation, audit logs, and contractual guarantees that your information won't be used for training.

The policy is simple: use company-approved enterprise AI tools for any work involving internal data. Public tools are fine for general research—but the moment sensitive information is involved, switch to the enterprise version.

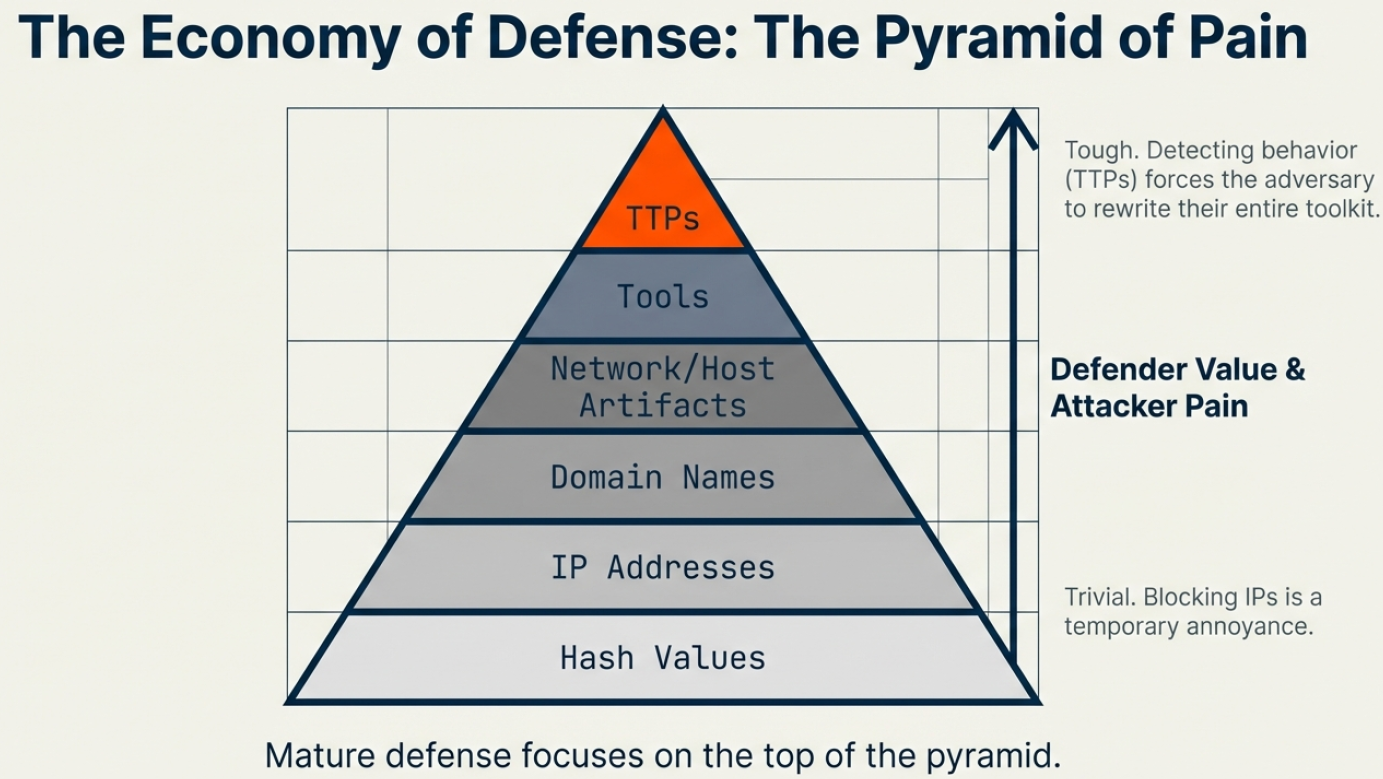

The Pyramid of Pain: A Smarter Defense Strategy

Here's the concept that separates mature security programs from reactive ones.

Not all defenses are equal. Some actions cause attackers minor inconvenience; others force them to completely rebuild their approach. The Pyramid of Pain ranks your defensive options by how much difficulty they create for attackers:

The Pyramid of Pain: Focus your defenses on behaviors (TTPs) at the top, not easily-changed indicators at the bottom.

The bottom line: If your security program focuses on blocking known bad IP addresses and file signatures, you're fighting yesterday's war. Modern defense requires detecting behavior—the patterns that define how attackers operate, not just the specific tools they happen to use today.

The Frameworks That Can Guide You

You don't have to figure this out alone. Several established frameworks provide guidance for managing AI and cyber risk:

NIST AI Risk Management

The U.S. government's comprehensive framework for trustworthy AI development, including testing, evaluation, verification, and validation protocols.

OWASP Top 10 for LLMs

The definitive list of AI-specific vulnerabilities—the security community's consensus on what can go wrong.

MITRE ATT&CK + ATLAS

Mapping traditional attack techniques alongside AI-specific threats for comprehensive, behavior-based defense.

EU AI Act

Emerging regulations requiring transparency, documentation, and risk assessment for AI systems. Compliance is becoming non-optional.

The Critical Question: Where Do You Stand?

Every business leader needs to understand the gap between their current security posture and where they need to be. This isn't about fear—it's about informed decision-making.

Honest Self-Assessment

Do we have visibility into which AI tools our employees are actually using?

Have we established clear, practical policies for AI use with sensitive data?

Are our defenses built to detect attacker behavior, or just known threats?

Do we understand the AI components embedded in our vendor applications?

Have we assessed our AI systems against the OWASP Top 10 vulnerabilities?

Is our incident response plan updated for AI-enhanced attack scenarios?

Do we maintain a "Software Bill of Materials" for our AI tools?

Are we tracking emerging regulatory requirements (EU AI Act, state laws)?

If you answered "no" or "I don't know" to more than two of these questions, you have a gap that needs attention.

And that gap represents real risk—not theoretical, someday risk, but risk that could materialize tomorrow morning as a phone call from your bank, your lawyer, or your largest customer.

The Path Forward

The AI-driven threat landscape is real, but so is your ability to respond. The organizations that thrive won't be the ones who ignore these risks or panic about them—they'll be the ones who take measured, informed action.

Start with visibility. Move to policy. Build toward behavioral defense. Integrate AI governance into your existing risk management.

The goal isn't perfect security. It's understanding your risk and making smart decisions about how to manage it.

Free Generative AI Assessment

A comprehensive review of your organization's AI exposure: safeguards for data and privacy, compliance with emerging regulations, and practical recommendations for closing the gap between your present state and a risk-optimized future.

Hudson Valley CISO

A Division of Security Medic Consulting

Fractional CISO Services | AI Governance | Cybersecurity Strategy